Does your donor data belong to you? What nonprofit teams need to know about AI and data privacy

AI adoption in nonprofit fundraising is moving faster than the policy frameworks designed to govern it. That gap creates real risk, not from bad intentions, but from the reasonable assumption that a helpful tool is a safe one.

The most common version of the problem looks like this: a gift officer is preparing for a major donor call. They open a general-purpose AI tool, paste in the donor's giving history and interaction notes, and ask for a summary. It saves time, produces a reasonable output, and seems harmless. But the data they submitted (personal financial behavior, relationship context, and philanthropic motivations) may have just been processed by a commercial model that retains and learns from what it receives.

This isn't hypothetical, and it isn't a rare edge case. It's happening across the sector without organizational awareness or policy guidance.

Three things worth understanding clearly

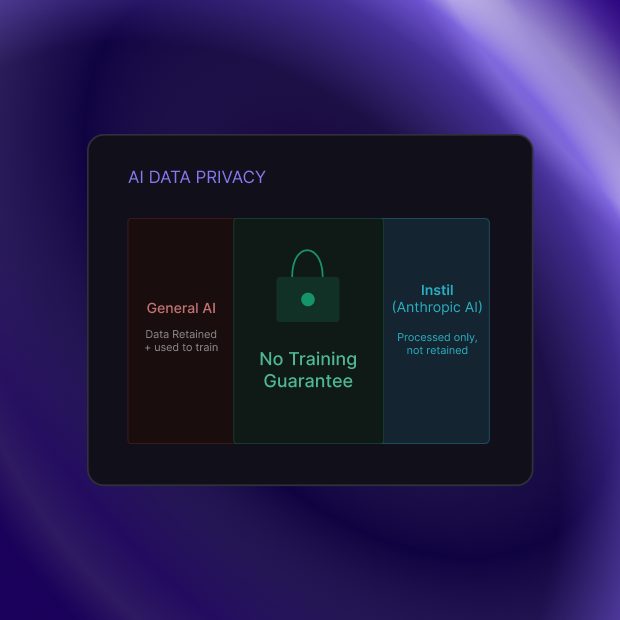

By default, most consumer-tier AI tools train on what you send them

The default data handling policy for most free and consumer-tier AI products includes the use of submitted data to improve the model. That's how the products get better. It also means that donor records, interaction notes, and giving history submitted through these tools may be retained beyond the session and used in ways your privacy policy doesn't contemplate.

This isn't a criticism of those products; it's simply what they are. The question is whether they're the right tool for data that was shared with your organization under an implicit expectation of discretion.

No-policy environments create unmanaged exposure

A 2026 AFP survey found that nearly half of nonprofits have no formal AI use policy. Without one, staff make individual decisions about which tools to use and what data to share, and those decisions vary by person, by day, and by how much time is available to think them through.

The exposure isn't intentional. It's the natural result of useful tools arriving faster than governance frameworks.

Vendor commitments vary significantly, and the difference matters

Not every AI vendor handles data the same way, and the distinctions aren't always easy to find in product documentation. The question worth asking any vendor is specific: Does your AI use data my organization submits to train or improve your underlying models? The answer should be in writing and unambiguous.

What thoughtful governance looks like

For major gifts teams, data governance around AI doesn't need to be complicated. Three things cover most of the ground:

A clear distinction between tool categories

A policy that distinguishes between general-purpose AI tools, where donor data should not be submitted, and purpose-built nonprofit platforms with explicit no-training agreements gives staff a usable decision framework rather than a blanket prohibition.
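For teams that want to write the rule down precisely, the two-category distinction can be expressed as a very small decision function. This is only a minimal sketch under stated assumptions: the names `ToolCategory` and `may_submit_donor_data` are hypothetical, and a real policy would likely cover more cases than these two categories.

```python
from enum import Enum


class ToolCategory(Enum):
    # Hypothetical categories mirroring the two groups described above.
    GENERAL_PURPOSE = "general-purpose"  # consumer or free-tier AI tools
    PURPOSE_BUILT = "purpose-built"      # platforms with a written no-training agreement


def may_submit_donor_data(category: ToolCategory, contains_donor_data: bool) -> bool:
    """Return True if this illustrative policy allows the submission."""
    if not contains_donor_data:
        # The policy sketched here only restricts donor data.
        return True
    # Donor data is allowed only on purpose-built platforms.
    return category is ToolCategory.PURPOSE_BUILT
```

The point of the sketch is that the rule has one condition, which is what makes it usable in the middle of a busy day.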

Awareness of why the distinction matters

The policy is only useful if the people making daily tool decisions understand the reasoning behind it. A brief team conversation about data handling (what goes where, and why) is usually sufficient.

Vendor commitments in writing

For any AI tool that will process donor data, ask for the data handling commitment in a document, not just an FAQ. Look specifically for no-training guarantees, data retention policies, and terms covering what happens to your data if you stop using the service.
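The three items above can also be tracked as a simple checklist during vendor review. The sketch below is illustrative only: `VendorCommitments` and `missing_commitments` are hypothetical names, and each field is assumed to mean "the vendor has provided this in a written document".

```python
from dataclasses import dataclass


@dataclass
class VendorCommitments:
    # Each flag is True only if the commitment exists in writing (assumption).
    no_training_guarantee: bool
    retention_policy_documented: bool
    exit_terms_documented: bool  # what happens to data when you stop using the service


def missing_commitments(vendor: VendorCommitments) -> list[str]:
    """List the written commitments still outstanding for this vendor."""
    checks = {
        "no-training guarantee": vendor.no_training_guarantee,
        "data retention policy": vendor.retention_policy_documented,
        "data handling on exit": vendor.exit_terms_documented,
    }
    return [name for name, provided in checks.items() if not provided]
```

An empty list would mean all three commitments are on file; anything else is a follow-up question for the vendor.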

How Instil approaches this

Instil's AI layer is built on Anthropic's commercial API. Anthropic's enterprise agreement includes an explicit no-training clause: data processed through the commercial API is not used to improve Anthropic's models. This is the same agreement that banks, law firms, and healthcare organizations rely on when they process sensitive client data through Anthropic's technology.

For Instil, this means that when a donor briefing is generated from your Salesforce or Blackbaud data, that data is used to produce the output and is not retained for model training. Your donors' information stays in your systems.

Donor trust is foundational to major gifts work. The expectation of discretion is part of what makes a donor willing to share the financial and personal context that makes major gifts conversations possible. Protecting that expectation, including in the tools your team uses, is worth the due diligence.

Want to understand how Instil handles your donor data? In every demo, we walk through the data architecture plainly, without jargon.